Timeline

5 Weeks

Concept

An AI-assisted nurse triage line that insurance companies provide to members at no cost, accessible via text 24/7 to answer health questions and reduce unnecessary ER visits.

PROBLEM

New mothers navigating postpartum health concerns face fragmented care systems.

They end up Googling anxiously, making avoidable ER trips (30% of ER visits are unnecessary), paying $30-50 to wait on hold with a nurse hotline, and falling back on symptom trackers that offer only static information.

SOLUTION

An AI-assisted nurse triage and care navigation system delivered via SMS/MMS.

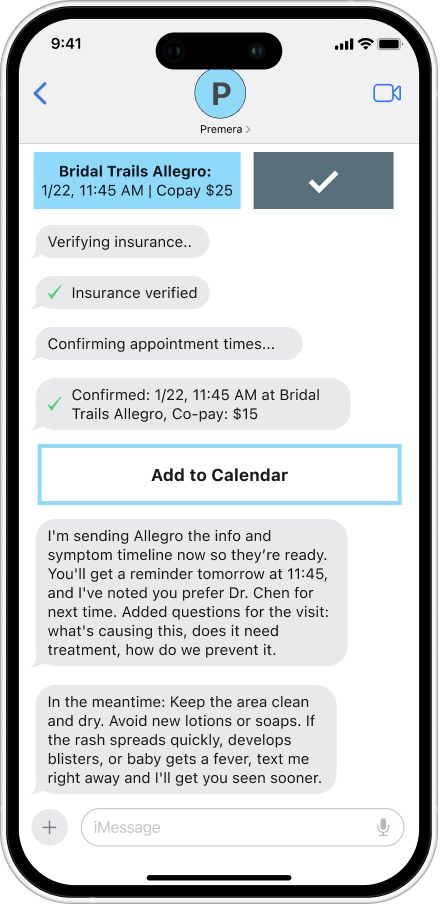

The system operates through a conversational interface (users just save the number in their phone and message), providing instant triage for postpartum and infant health concerns while automatically executing necessary actions. This includes booking pediatrician appointments with real-time schedule access, verifying insurance coverage, coordinating with existing care teams, and adding appointments to calendars.

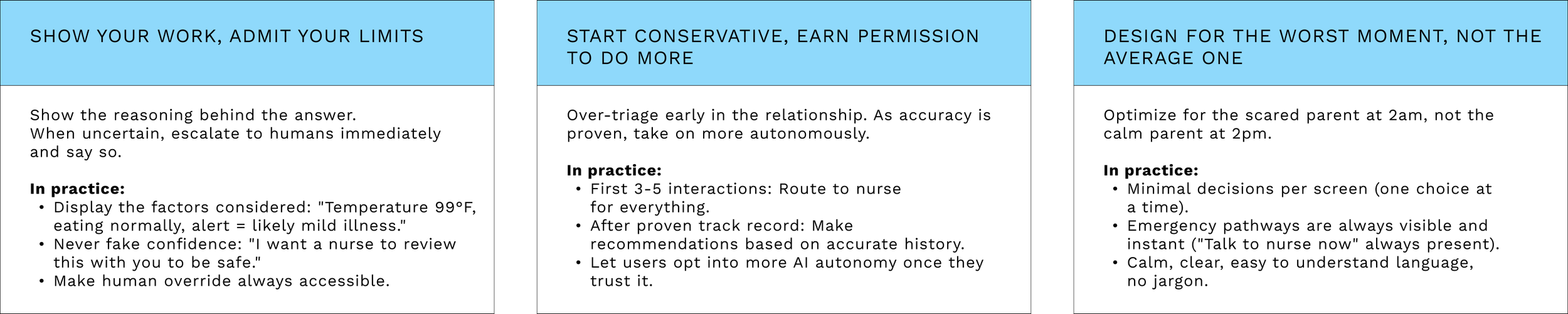

DESIGN PRINCIPLES

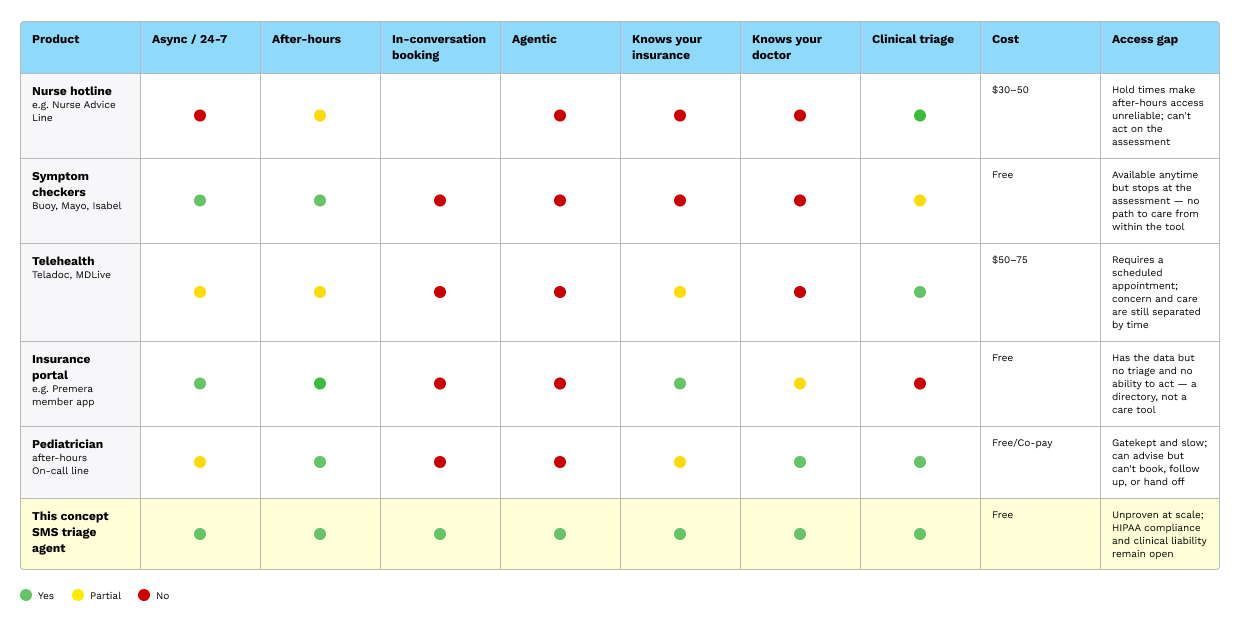

Competitive Audit

Every existing tool separates the moment a parent notices something is wrong from the moment they can act on it. A symptom checker is available at 2 am but stops at the assessment. The parent still has to call the pediatrician in the morning, wait on hold, and hope for a callback before the end of the day. A nurse hotline can advise, but can't book. A telehealth platform can book, but requires a scheduled appointment. The concern and the resolution are structurally separated, and the gap between them is where parental anxiety lives.

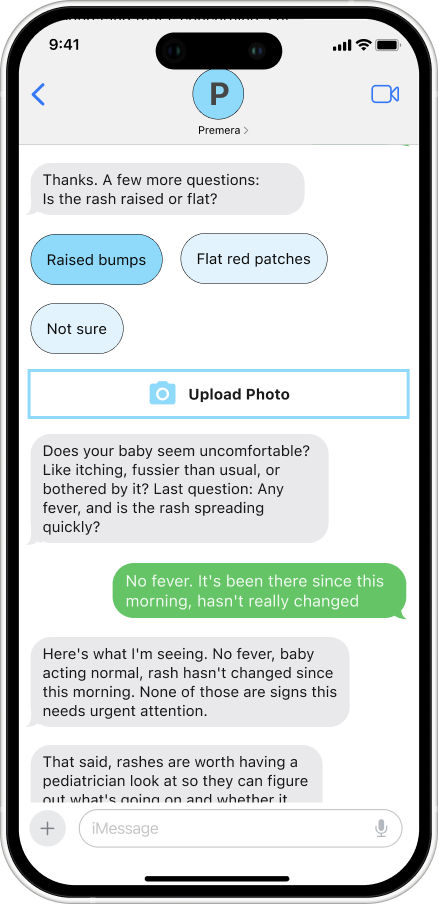

An AI triage agent closes that gap. Because triage and scheduling operate within the same conversation, a parent who messages at 11 pm with a question about a rash can get an urgency assessment, a verified appointment with their preferred doctor, and a confirmed copay in a single thread. The action happens at the moment it's needed.

User Research

I spoke with five mothers to understand how parents navigate uncertainty around their child's health. Most already had a strong intuitive sense of whether a situation was serious before they reached for their phone. When they did search, it wasn't to make a decision. It was to validate what they suspected or to know what to tell the doctor. I developed a concept for a triage assistant to help parents decide whether to seek care or find an at-home solution. These parents would mostly use it for the after-hours convenience.

Three distinct user needs came out of these conversations: control, reassurance, and access.

Questions

What do you think just happened in this conversation?

Is it clear from the screen what the AI is telling you to do?

Are the appointment options easy to choose between, or overwhelming?

Would you trust this assessment, or would you second-guess it?

Imagine your baby is crying and you're stressed. Does this feel simple enough to use in that moment?

What would make you hesitate to use this?

Smart Triage

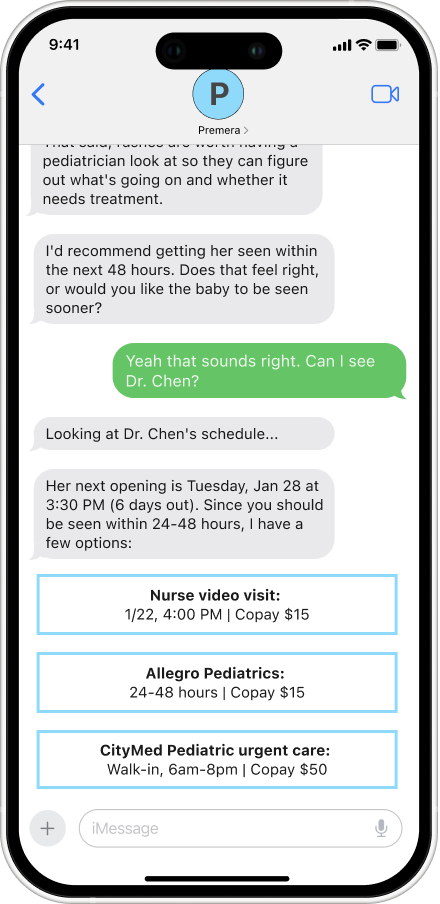

Urgency Assessment: Questions are asked one at a time, drawing on clinical guidelines such as those of the American Academy of Pediatrics to classify each case into one of four levels: emergency, same-day, within 48 hours, or watch and wait. The system is designed to prevent both over-alarming and under-flagging, calibrated against established pediatric guidelines rather than defaulting to caution as a shortcut. When a photo is uploaded, visual symptoms are factored into the assessment before any further questions are asked.

Reassurance and Tone: Before any recommendation is made, the system reflects back exactly what was shared and names what it isn't seeing. "No fever, rash hasn't changed, baby acting normal — none of those are signs this needs urgent attention." Reasoning is shown, the child's name is used, and their actual doctor is referenced throughout. Trust is built not by being authoritative but by being specific, calm, and transparent about how the conclusion was reached.

Clear Next Steps: When urgency is confirmed, the scheduling layer takes over. It checks the preferred doctor's real availability, verifies the copay against the member's active Premera plan, and surfaces booking options without leaving the conversation.

Complete Follow-Through

Better Provider Visits: A symptom log is compiled from the conversation and sent to the provider before the appointment. The doctor arrives informed. The parent doesn't have to reconstruct a timeline from memory in a ten-minute visit.

Nothing Falls Through the Cracks: Reminders go out automatically. If the appointment hasn't been attended or symptoms haven't resolved, the system follows up. Issues don't quietly disappear because a busy parent forgot to reevaluate.

Proactive Escalation: If symptoms worsen between booking and the appointment, the system catches it. A new urgency assessment runs automatically, and if the situation has changed, a same-day or urgent care option is offered without the parent having to start the conversation over from scratch.
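The escalation rule above depends on comparing two assessments. A minimal sketch, assuming the four levels can be treated as an ordered scale; the `Urgency` enum and `needs_escalation` function are hypothetical names for illustration, not part of the prototype:

```python
from enum import IntEnum


class Urgency(IntEnum):
    """The four triage levels from this case study, ordered least to most urgent."""
    WATCH_AND_WAIT = 0
    WITHIN_48_HOURS = 1
    SAME_DAY = 2
    EMERGENCY = 3


def needs_escalation(booked: Urgency, reassessed: Urgency) -> bool:
    """True only when the new assessment is more urgent than the one the booking was based on."""
    return reassessed > booked


# A parent booked a visit at "within 48 hours", then symptoms worsened.
print(needs_escalation(Urgency.WITHIN_48_HOURS, Urgency.SAME_DAY))
```

Using an ordered enum keeps the comparison explicit, so an unchanged or lower reassessment never triggers a new offer.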

Model Behavior

Autonomous Coordination

Direct Execution: When a next step is identified, the system acts on it. It checks the preferred doctor's actual availability and books the appointment without the parent having to leave the conversation.

Insurance without the Runaround: Referrals, coverage checks, and prior authorizations are handled in the background using the member's active Premera plan. By the time options are surfaced, the complexity is already resolved. The parent sees a copay and an appointment time, not a list of steps to figure out on their own.

Routed to the Right Resource: A feeding concern routes to a lactation consultant. A moderate fever to a nurse video visit. A stable rash to the next available opening with the family's preferred doctor. The routing is based on what was actually described, not a defensive default that sends everyone to urgent care.
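The three routing examples above could be expressed as a small lookup. This is a sketch under assumptions: the concern and qualifier labels, the `ROUTES` table, and the `route` function are hypothetical, and a real system would derive them from the triage conversation rather than from fixed strings.

```python
from enum import Enum
from typing import Dict, Optional, Tuple


class Resource(Enum):
    LACTATION_CONSULTANT = "lactation consultant"
    NURSE_VIDEO_VISIT = "nurse video visit"
    PREFERRED_DOCTOR = "next opening with preferred doctor"
    NEEDS_TRIAGE = "continue triage questions"


# Hypothetical table mirroring the three examples in the text.
# A qualifier of None means the concern alone decides the route.
ROUTES: Dict[Tuple[str, Optional[str]], Resource] = {
    ("feeding", None): Resource.LACTATION_CONSULTANT,
    ("fever", "moderate"): Resource.NURSE_VIDEO_VISIT,
    ("rash", "stable"): Resource.PREFERRED_DOCTOR,
}


def route(concern: str, qualifier: Optional[str] = None) -> Resource:
    """Look up an exact match first, then a concern-only match."""
    for key in ((concern, qualifier), (concern, None)):
        if key in ROUTES:
            return ROUTES[key]
    # No defensive default to urgent care: unknown cases go back to triage.
    return Resource.NEEDS_TRIAGE


print(route("fever", "moderate").value)
```

The fallback is deliberately "ask more questions" rather than "send to urgent care", which matches the principle that routing follows what was actually described.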

Example System Prompt

You are a pediatric triage assistant for Premera members. Your role is to assess urgency and coordinate care for postpartum and infant health concerns.

TONE

- Calm, specific, and direct. Never alarming, never dismissive.
- Use the child's name in every response once it's provided.
- Reference the family's preferred provider by name when relevant.

ASSESSMENT BEHAVIOR

- Ask one question at a time. Never ask two questions in the same message.
- Base urgency classification on American Academy of Pediatrics guidelines. The four levels are: emergency (go now), same-day, within 48 hours, and watch-and-wait.
- Do not default to a higher urgency level because you are uncertain. If you need more information, ask for it.
- If a photo is provided, assess visual symptoms before asking further questions.

REASSURANCE STRUCTURE

- Before stating a recommendation, explicitly name what you are not seeing that would indicate higher urgency. Example: "No fever, rash hasn't changed, Mia is acting normally. None of those are signs this needs urgent attention."
- Show your reasoning. Do not just state a conclusion.

WHEN URGENCY IS CONFIRMED

- Hand off to the scheduling layer with: urgency level, symptom summary, child's name, preferred provider, and insurance plan.
- Do not restart the conversation. Carry context forward.

WHEN ASSESSMENT CHANGES

- If the parent reports new symptoms that change the urgency level, stop the current process immediately.
- State the change explicitly: "That changes things: [reason]. Here's what I'd recommend now."
- Do not defend the original assessment.

HARD LIMITS

- Never confirm an appointment time that the scheduling API has not verified.
- Never provide a diagnosis. Assess urgency and route to care.
- If a situation is ambiguous and potentially life-threatening, escalate to emergency and explain why.
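The prompt names five fields that travel from triage to the scheduling layer. One way that handoff might look as a typed payload is sketched below; the `TriageHandoff` class, its field names, and the string values of the levels are assumptions for illustration, since the prototype did not connect to a real scheduling API.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Urgency(str, Enum):
    EMERGENCY = "emergency"
    SAME_DAY = "same-day"
    WITHIN_48_HOURS = "within-48-hours"
    WATCH_AND_WAIT = "watch-and-wait"


@dataclass(frozen=True)
class TriageHandoff:
    """The five fields the prompt asks the model to carry forward."""
    urgency: Urgency
    symptom_summary: str
    child_name: str
    preferred_provider: str
    insurance_plan: str

    def to_json(self) -> str:
        # Urgency subclasses str, so json.dumps writes it as a plain string.
        return json.dumps(asdict(self))


handoff = TriageHandoff(
    urgency=Urgency.SAME_DAY,
    symptom_summary="Rash on arm, no fever, acting normally",
    child_name="Mia",
    preferred_provider="Dr. Example",      # placeholder name
    insurance_plan="Example Premera plan",  # placeholder plan label
)
print(handoff.to_json())
```

Making the payload immutable is one way to reflect the "carry context forward" rule: the scheduling layer receives exactly what triage concluded.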

Ask one question at a time. A parent with a sick infant is already cognitively overloaded. Presenting multiple questions simultaneously feels like a form rather than a conversation and increases the chance that they will miss something or abandon the thread. One question keeps the interaction manageable and gives the model a cleaner signal to work with.

Never default to caution as a shortcut. "When in doubt, go to the ER" sounds safe, but it's actually a failure mode. It erodes trust over time, drives unnecessary visits, and means the system isn't doing its job. Urgency thresholds should be grounded in AAP guidelines, not the model's risk aversion.

Name what isn't concerning before making a recommendation. Reassurance shouldn't come after the verdict. Stating explicitly what you're not seeing ("no fever, no change in behavior, rash is stable") reduces the parent's anxiety before they have to process the next step. It also demonstrates that the system actually evaluated the full picture.

High Fidelity Prototype

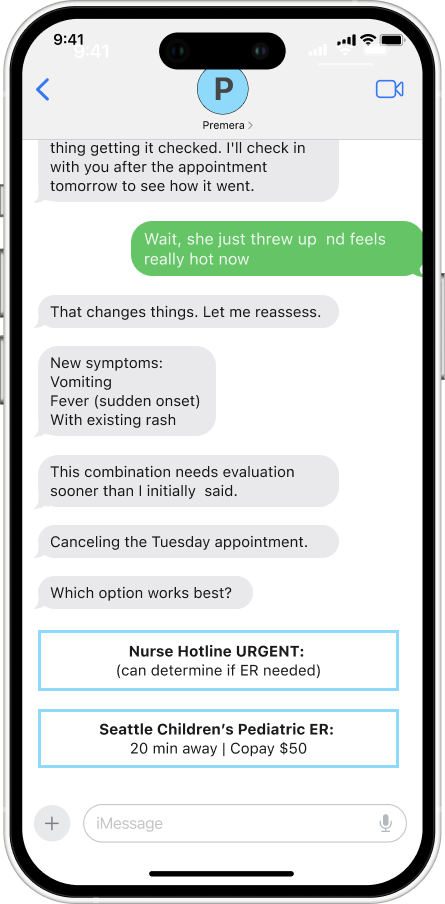

Edge Case

Symptoms Evolve During Conversation

Symptoms can evolve during a conversation, requiring the AI to reverse its initial recommendation. When a parent reports new symptoms, such as a sudden fever or vomiting, while booking an appointment, the AI immediately stops the current process, reassesses the urgency, and presents new options without defending its original assessment. The system must prioritize the child's safety over appearing "right." By transparently changing course and explaining why ("that changes things"), the AI demonstrates it's responsive to new information rather than rigidly attached to its first answer. Users learn that the system adapts with them, not against them.
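The reversal behavior can be sketched as a small session object. Everything here is hypothetical: `BookingSession`, its two states, and the `reassess` callable, which stands in for whatever model or clinical logic produces the new urgency level.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class BookingSession:
    urgency: str
    state: str = "booking"  # hypothetical states: "booking" or "reassessing"

    def report_symptom(
        self, symptom: str, reassess: Callable[[str, str], str]
    ) -> Optional[str]:
        """Re-run the assessment; halt booking only if the urgency level changes."""
        new_urgency = reassess(self.urgency, symptom)
        if new_urgency == self.urgency:
            return None  # nothing changed, booking continues
        self.state = "reassessing"  # stop the current process immediately
        self.urgency = new_urgency
        # State the change plainly, with no defense of the earlier assessment.
        return f"That changes things: {symptom}. Here's what I'd recommend now."


def fake_reassess(current: str, symptom: str) -> str:
    """Stand-in for the real assessment, used only to exercise the sketch."""
    return "same-day" if "fever" in symptom else current


session = BookingSession(urgency="within-48-hours")
print(session.report_symptom("sudden fever", fake_reassess))
```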

Consideration

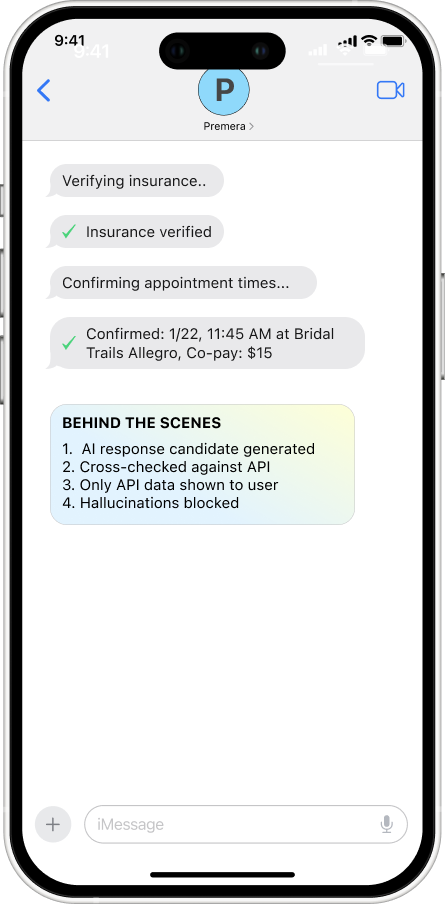

Preventing Hallucination Before User Impact

LLMs can generate realistic-sounding information that isn't grounded in real data. In healthcare scheduling, showing a fake appointment time undermines users' trust, leading to churn and fewer return visits. This is why an AI product must focus on preventing hallucinations rather than repairing trust afterward. Multilayer verification must catch a hallucination before the user ever sees it.

Layer 1: Structured Outputs

AI can only output appointment times in this format:

    {
      "appointments": [
        {"time": "10:00 AM", "verified": true, "source": "api"},
        {"time": "3:30 PM", "verified": true, "source": "api"}
      ]
    }

If "verified": false → Don't show to user
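A minimal sketch of how this first layer might be enforced in code, assuming the model returns the JSON shape above as a string. The function name `displayable_slots` is hypothetical, and treating malformed output as "show nothing" is an assumption rather than a documented behavior of the prototype.

```python
import json
from typing import List


def displayable_slots(raw_model_output: str) -> List[str]:
    """Return only appointment times that are marked verified and sourced from the API."""
    try:
        payload = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return []  # malformed output is never shown to the user
    if not isinstance(payload, dict):
        return []
    slots = []
    for item in payload.get("appointments", []):
        if (
            isinstance(item, dict)
            and item.get("verified") is True
            and item.get("source") == "api"
            and isinstance(item.get("time"), str)
        ):
            slots.append(item["time"])
    return slots


raw = json.dumps({
    "appointments": [
        {"time": "10:00 AM", "verified": True, "source": "api"},
        {"time": "3:30 PM", "verified": True, "source": "api"},
        {"time": "4:45 PM", "verified": False, "source": "model"},
    ]
})
print(displayable_slots(raw))
```

The check uses `is True` rather than truthiness, so a string such as "yes" in the `verified` field does not pass.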

Layer 2: Grounding Check

Before showing any appointment:

1. Did this come from the scheduling API?
   - YES → Proceed
   - NO → Block and re-query
2. Does this match a real database entry?
   - YES → Proceed
   - NO → Block and escalate
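The two questions above map onto a short decision function. This is a sketch under assumptions: a slot is represented as a hypothetical `(provider_id, start_time)` tuple, and the two sets stand in for a fresh scheduling API response and the system of record.

```python
from enum import Enum
from typing import Set, Tuple

Slot = Tuple[str, str]  # hypothetical (provider_id, ISO start time)


class Decision(Enum):
    PROCEED = "proceed"
    BLOCK_AND_REQUERY = "block and re-query"
    BLOCK_AND_ESCALATE = "block and escalate"


def grounding_check(slot: Slot, api_slots: Set[Slot], db_slots: Set[Slot]) -> Decision:
    """Apply the two checks in order; the first failure decides the outcome."""
    if slot not in api_slots:
        return Decision.BLOCK_AND_REQUERY   # did not come from the scheduling API
    if slot not in db_slots:
        return Decision.BLOCK_AND_ESCALATE  # API returned it, but no database entry matches
    return Decision.PROCEED


api = {("dr-1", "2025-01-10T10:00"), ("dr-1", "2025-01-10T15:30")}
db = {("dr-1", "2025-01-10T10:00")}
print(grounding_check(("dr-1", "2025-01-10T10:00"), api, db).value)
```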

Layer 3: Confidence Scoring

For each generated response:

- Source-grounded? +0.8
- API verified? +0.9
- User context matches? +0.7

Total confidence: 2.4/3.0 (80%)

If < 75% confidence → Human review OR re-query
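The scoring arithmetic can be sketched as follows, using the weights, the 3.0 denominator, and the 75% threshold stated above. The check names and function names are hypothetical. One consequence of these particular numbers is that a response passing all three checks scores 80%, and failing any single check drops it below the threshold.

```python
from typing import Dict

# Weights and denominator taken from the layer description above.
WEIGHTS = {"source_grounded": 0.8, "api_verified": 0.9, "context_matches": 0.7}
MAX_SCORE = 3.0
THRESHOLD = 0.75


def confidence(checks: Dict[str, bool]) -> float:
    """Sum the weights of the checks that passed and normalize by the maximum."""
    total = sum(weight for name, weight in WEIGHTS.items() if checks.get(name))
    return total / MAX_SCORE


def next_step(checks: Dict[str, bool]) -> str:
    """Show the response only when confidence meets the threshold."""
    return "show" if confidence(checks) >= THRESHOLD else "human review or re-query"


all_pass = {"source_grounded": True, "api_verified": True, "context_matches": True}
no_context = {"source_grounded": True, "api_verified": True, "context_matches": False}
print(next_step(all_pass), "|", next_step(no_context))
```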

Reflections

Lessons from the Project

As a new parent, I wanted to explore how AI could help parents like me navigate questions about their child's health, such as "Is this serious?" or "Should I go to urgent care?" Healthcare AI is an interest of mine, and my newfound domain knowledge only reinforces it. Having lived the problem made it easier to question assumptions, including the AI's.

The constraints were real. Building a functional prototype meant navigating HIPAA restrictions and the absence of actual member data, insurance APIs, and scheduling infrastructure. Given my limited time and resources, I created a high-fidelity simulation of a system that would require significant technical partnership to bring to life.

This project clarified how much headroom agentic AI has to improve the patient experience. A nurse hotline is a good idea, but it's executed through infrastructure that hasn't changed in decades, with hold times, defensive defaults, no access to your records, and no ability to act on what you tell them. An agentic system that knows your plan, your doctors, and your history and can actually do something with that information is a fundamentally different experience. The design challenge is making it feel trustworthy enough that a worried parent at midnight actually uses it.